Dive into this slide-by-slide analysis of the 10-slide pitch deck that helped Anthropic raise $580M in 2022 from investors including FTX’s Sam Bankman-Fried.

Anthropic was founded in January 2021 by siblings Dario Amodei and Daniela Amodei, along with Sam McCandlish, Jack Clark, Tom Brown, and other former OpenAI researchers disillusioned by the rapid commercialisation of powerful AI without adequate safety guardrails. Having left OpenAI amid internal debates over profit motives versus safety, the team aimed to prioritise building reliable, interpretable, and steerable AI systems aligned with human values.

Early days focused on core research into ‘Constitutional AI,’ a novel approach embedding ethical principles directly into model training. With no product yet, they bootstrapped via prior connections, building a 100-person team of top AI talent whilst navigating compute shortages and intense competition from incumbents like OpenAI.

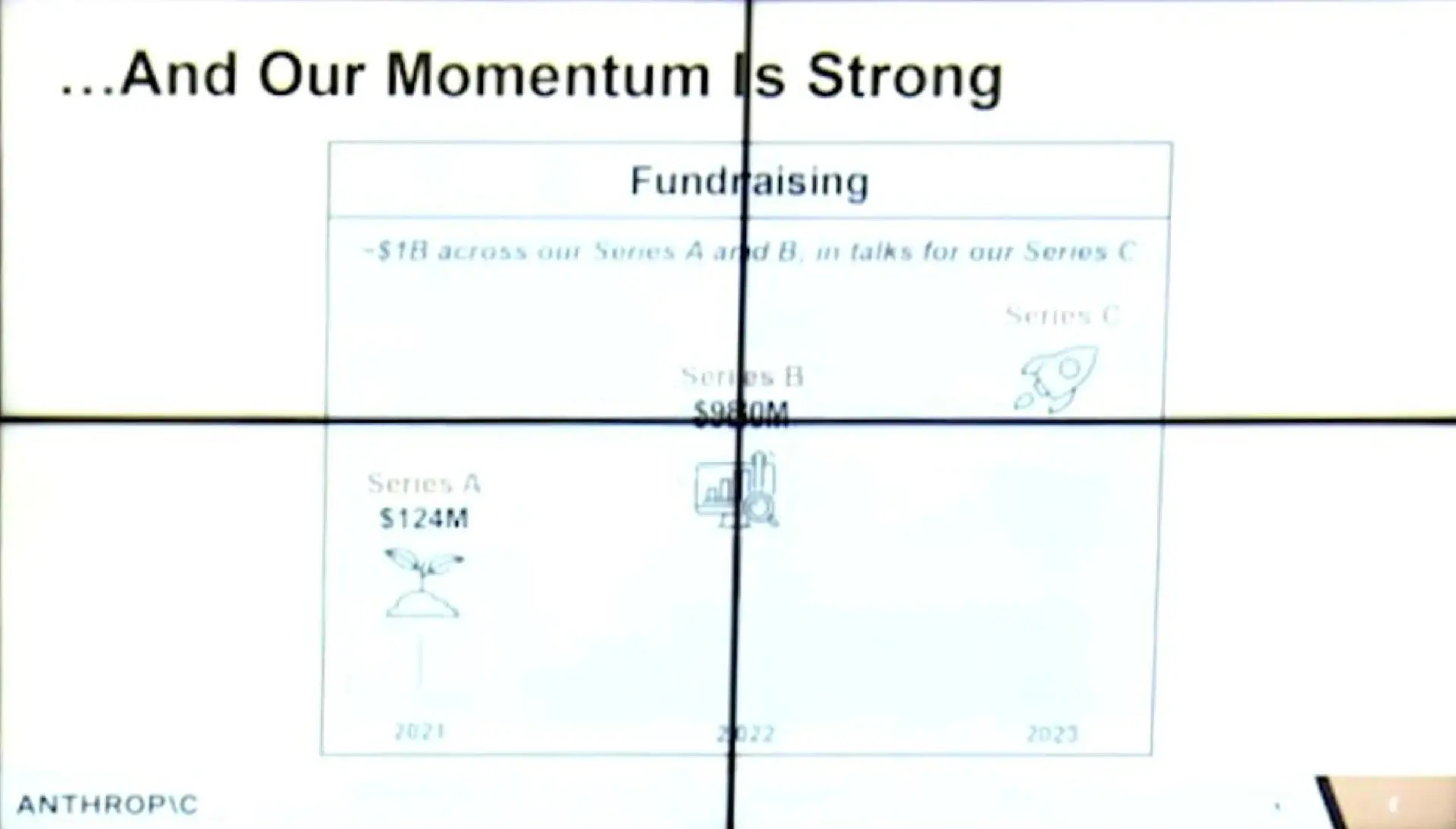

Fundraising began amid the 2022 AI hype cycle, but Anthropic differentiated itself by pitching safety over raw capability. Having raised a $124M Series A in 2021, the company targeted a Series B with FTX’s Sam Bankman-Fried as lead despite crypto volatility. The pitch emphasised team reputation and waitlist traction to de-risk the research-heavy bet.

The deck succeeded, closing $580M at a valuation implying massive future scale. This capital fuelled Claude model development, leading to explosive growth and positioning Anthropic to absorb billions in institutional funding.

The opening slide positions Anthropic with surgical precision as ‘an AI safety and research company’, immediately differentiating from capability-obsessed competitors. The tagline emphasises reliability, interpretability, and steerability—three technical terms that signal deep expertise whilst addressing investor concerns about AI alignment. This isn’t just another ChatGPT clone; it’s a fundamentally different approach to artificial intelligence development.

The visual design is deliberately understated, eschewing Silicon Valley flash for academic credibility. This design choice reinforces the research-first positioning and appeals to institutional investors who prefer substance over style. The clean typography and minimal colour palette suggest methodical thinking and scientific rigour—qualities essential when handling potentially transformative technology.

What investors see: A company that understands the risks of AI development and positions safety as a competitive moat rather than a constraint. The emphasis on ‘steerable’ systems suggests technology that can be controlled and commercialised responsibly, reducing regulatory risk whilst creating enterprise value through trustworthy AI deployment.

This slide tackles the elephant in the room: whilst competitors race to build more powerful models, they’re ignoring fundamental safety challenges. The problem statement highlights critical risks of scaling AI without proper alignment measures, positioning Anthropic as the only team seriously addressing these concerns. By framing safety as an urgent, unsolved technical problem rather than a philosophical concern, they create market space that others are abandoning.

The timing is crucial: presented in early 2022, ahead of the Series B announcement that April, this slide leverages growing awareness of AI risks and increasing regulatory scrutiny. The visual likely includes stark contrasts between current AI capabilities and safety measures, demonstrating the growing gap that Anthropic aims to close. This problem framing transforms a perceived weakness (slower capability development) into a strategic advantage.

What investors see: A massive market opportunity disguised as a technical challenge. Smart investors recognise that the first company to solve AI safety will capture enormous enterprise and government contracts from organisations that need AI but can’t risk deployment without proper safeguards. The problem slide positions Anthropic to win the inevitable safety-conscious AI market that regulatory pressure will create.

Constitutional AI represents Anthropic’s core technical innovation: training models to follow explicit principles embedded directly into their behaviour. Unlike traditional approaches that rely on post-hoc filtering or human feedback, this method builds alignment into the model’s foundation. The solution elegantly addresses the interpretability problem—users can understand why an AI makes certain decisions because it follows transparent constitutional principles.

The constitutional framework creates multiple competitive advantages: it makes AI behaviour more predictable for enterprise deployment, reduces liability for companies using the technology, and enables customisation of AI systems for specific use cases or regulatory environments. This isn’t just safer AI—it’s more commercially viable AI for risk-averse organisations that represent the largest market opportunity.

What investors see: A defensible technical moat that solves real business problems. Constitutional AI transforms safety from a cost centre into a value driver, enabling Anthropic to charge premium prices for AI systems that enterprises can actually deploy without regulatory or reputational risk. The approach suggests a licensing model where companies pay for constitutional frameworks tailored to their specific compliance needs.

The timing argument rests on two trends: AI scaling laws suggest that model capabilities improve predictably as compute, data, and model size grow, whilst compute availability has expanded rapidly through cloud infrastructure and specialised chips. This creates an urgent window where safety research can still influence the trajectory of AI development before systems become too powerful to control. The slide likely shows exponential curves that make the stakes visceral for investors.

Regulatory pressure adds urgency: governments worldwide are scrambling to understand and control AI development, creating demand for companies that can provide governance-ready solutions. The European Union’s AI Act, a growing number of Congressional hearings, and board-level discussions of AI risk all validate Anthropic’s safety-first approach. This isn’t just good timing; it’s inevitable market evolution.

What investors see: A brief window to establish market leadership before the safety advantage becomes table stakes. Smart investors understand that whoever solves AI safety first will capture the lion’s share of institutional AI spending, as enterprises and governments will pay premium prices for systems they can actually trust and deploy at scale without existential risk.

The team slide is Anthropic’s strongest asset: Dario Amodei (CEO) and Daniela Amodei (President) bring OpenAI pedigree, with publications on AI safety and scaling laws. Tom Brown was lead author of the GPT-3 paper, whilst Jack Clark led OpenAI’s policy work. This isn’t just talent acquisition; it’s strategic defection from the market leader by researchers who prioritised safety over speed. Their departure validates Anthropic’s thesis that OpenAI abandoned safety for commercial pressure.

The credibility is unassailable: these researchers literally wrote the papers that enabled modern AI scaling. They understand the technology’s potential and dangers better than anyone, having seen OpenAI’s transformation from safety-focused research lab to commercial juggernaut. Their willingness to start over with safety as the primary goal signals conviction that’s impossible to fake or replicate.

What investors see: The A-team of AI research choosing safety over immediate profits, validating both the technical approach and market opportunity. When the people who built the most successful AI systems to date start a company focused on safety, it signals that safety isn’t just philosophically important—it’s commercially essential. The team slide de-risks the entire investment by proving execution capability.

[Insert image: anthropic-slide-06-traction.webp]

With 2,500 companies on the waitlist despite having no public product, Anthropic demonstrates unprecedented demand for safe AI systems. These aren’t curious consumers—they’re enterprises and institutions that need AI but can’t risk deployment without proper safeguards. Letters of intent and pilot discussions prove that safety isn’t just a nice-to-have feature; it’s a market requirement that existing players aren’t meeting.

The quality of interested parties matters more than quantity: government agencies, Fortune 500 companies, and regulated industries that represent the highest-value AI contracts. These organisations have massive AI budgets but strict compliance requirements that prevent them from using existing solutions. Anthropic’s waitlist represents pent-up demand worth billions in potential contracts.

What investors see: Product-market fit before product launch, validating that safety-first AI addresses real market needs rather than theoretical concerns. The waitlist demonstrates pricing power—companies are willing to wait and pay premium prices for AI they can actually deploy. This traction de-risks the entire business model by proving demand exists at scale.

[Insert image: anthropic-slide-07-safety-benchmarks.webp]

Anthropic’s proprietary safety benchmarks create a defensible moat by establishing the metrics that matter for AI evaluation. Whilst competitors focus on capability benchmarks like coding performance or reasoning tasks, Anthropic measures alignment, truthfulness, and harmlessness—qualities that enterprise customers actually need. By defining the evaluation criteria, they shape the competitive landscape in their favour.

The benchmarks likely show Anthropic’s models outperforming OpenAI, Google, and other competitors on safety measures whilst maintaining competitive capability scores. This positioning is genius: it allows them to claim superiority on the metrics they invented whilst remaining credible on traditional measures. As regulatory pressure increases, these safety benchmarks will become industry standards.

What investors see: A company that’s not just building better AI, but defining how AI quality should be measured. By establishing proprietary safety benchmarks, Anthropic positions itself as the thought leader in AI evaluation, creating a moat that extends beyond technology into market definition. This approach ensures they’ll be well-positioned as safety becomes a competitive requirement.

[Insert image: anthropic-slide-08-market-opportunity.webp]

The market opportunity slide quantifies the massive institutional capital flowing toward safe AI solutions, likely showing TAM in the hundreds of billions as enterprises and governments seek governance-driven AI deployment. The addressable market isn’t just AI adoption—it’s the intersection of AI capability and regulatory compliance, where Anthropic holds unique positioning. This represents the highest-value segment of the AI market.

The regulatory landscape creates market segmentation that favours Anthropic: whilst consumer AI can be deployed with minimal oversight, enterprise and government AI requires extensive safety measures, audit trails, and compliance frameworks. This isn’t a niche market—it’s the premium tier of AI deployment where customers have the largest budgets and highest willingness to pay for quality.

What investors see: A market opportunity that’s both massive and defensible, where Anthropic’s safety-first approach creates natural barriers to competition. The institutional focus means higher average contract values, longer customer lifetime value, and more predictable revenue streams than consumer AI markets. This positions Anthropic to capture disproportionate value from AI adoption.

[Insert image: anthropic-slide-09-roadmap-risks.webp]

The roadmap shows a methodical progression from research to scalable products, with clear milestones for model releases, safety benchmarks, and commercial deployment. Unlike typical startup roadmaps that promise aggressive growth, Anthropic’s timeline emphasises deliberate development with safety gates at each phase. This approach builds investor confidence by showing they understand the technical challenges and won’t rush to market prematurely.

The risk section demonstrates exceptional transparency by openly addressing technical scaling challenges, compute requirements, competitive pressure, and regulatory uncertainty. Rather than hiding risks, Anthropic presents detailed mitigation strategies for each concern. This transparency builds trust and shows sophisticated risk management—essential for investors backing a research-intensive company in a rapidly evolving field.

What investors see: A management team that understands their challenges and has concrete plans to address them, rather than naive optimism that ignores obvious risks. The roadmap balances ambition with realism, whilst the risk discussion demonstrates the analytical rigour that institutional investors require when backing deep tech ventures with long development cycles.

[Insert image: anthropic-slide-10-the-ask.webp]

The $580M ask is precisely tied to specific milestones: model development phases, safety advancement targets, and team expansion goals. The use of proceeds likely breaks down into compute infrastructure (40-50%), talent acquisition (30-35%), and research development (15-20%), with clear metrics for how each dollar drives toward commercial readiness. This level of specificity demonstrates financial discipline and strategic thinking.

The funding amount positions Anthropic to compete directly with OpenAI and Google on compute resources whilst maintaining their safety advantage. The ask includes runway for 18-24 months of intensive development, with clear checkpoints for additional funding based on achievement of technical and commercial milestones. This approach reduces investor risk by tying future funding to measurable progress.
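To make the use-of-proceeds arithmetic concrete, here is a minimal Python sketch that turns the percentage ranges and runway quoted above into dollar figures. The percentages and the 18-24 month runway are this analysis’s estimates rather than disclosed numbers, and the function names are hypothetical. The sketch also assumes, for simplicity, that the whole raise is spent evenly over the runway.

```python
# Illustrative only: the percentage ranges and runway are the estimates
# quoted in the analysis above, not figures disclosed by the company.
RAISE_USD = 580_000_000

# (low, high) share of the raise for each bucket.
USE_OF_PROCEEDS = {
    "compute infrastructure": (0.40, 0.50),
    "talent acquisition": (0.30, 0.35),
    "research development": (0.15, 0.20),
}

RUNWAY_MONTHS = (18, 24)  # (shortest, longest) runway


def allocation_ranges(total, shares):
    """Return the (low, high) dollar range for each bucket."""
    return {name: (total * lo, total * hi) for name, (lo, hi) in shares.items()}


def implied_monthly_burn(total, runway_months):
    """Return (low, high) average monthly spend if the raise lasts the runway."""
    shortest, longest = runway_months
    return total / longest, total / shortest


def shares_can_sum_to_one(shares):
    """Check that some point inside every range can add up to 100%."""
    low = sum(lo for lo, _ in shares.values())
    high = sum(hi for _, hi in shares.values())
    return low <= 1.0 <= high


if __name__ == "__main__":
    for name, (lo, hi) in allocation_ranges(RAISE_USD, USE_OF_PROCEEDS).items():
        print(f"{name}: ${lo / 1e6:.0f}M to ${hi / 1e6:.0f}M")
    burn_lo, burn_hi = implied_monthly_burn(RAISE_USD, RUNWAY_MONTHS)
    print(f"implied burn: ${burn_lo / 1e6:.1f}M to ${burn_hi / 1e6:.1f}M per month")
    print("ranges consistent:", shares_can_sum_to_one(USE_OF_PROCEEDS))
```

Under these assumptions, compute would absorb roughly $232M to $290M, and the implied average burn would sit between about $24M and $32M per month. The consistency check is a quick sanity test worth running on any use-of-proceeds slide: the quoted ranges should be able to total 100%.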

What investors see: A precisely calculated capital requirement that enables Anthropic to achieve market leadership without excessive dilution or runway risk. The milestone-based approach provides clear exit points and reduces execution risk, whilst the focus on infrastructure and talent demonstrates understanding of what’s required to build transformational AI technology at scale.

Whilst this deck secured one of the most consequential AI investments in history and launched Anthropic toward its current $380B valuation, it reflects the unique circumstances of 2022’s AI landscape where team credibility and safety positioning could overcome traditional fundraising requirements. Modern deep tech companies, even in pre-product stages, face heightened investor expectations around financial modelling, competitive analysis, and go-to-market strategy that this deck strategically omitted, highlighting the need for support from expert pitch deck consultants.

The deck contains no revenue model, projections, or unit economics, despite the research focus. Modern decks expect even pre-revenue startups to show a path to $100M+ ARR, with CAC and LTV estimates to demonstrate scalability.

It lacks visuals of prototypes, demos, or UI because no product existed. Today’s investors expect MVPs or mockups to visualise the user experience and validate technical feasibility.

Direct competitor comparison is minimal. Current best practice demands a 2×2 matrix or positioning map showing the unique safety moat versus OpenAI, Google, and others.

There are no details on sales channels, pricing tiers, or customer acquisition beyond the waitlist, all of which are essential for proving distribution in crowded AI markets.

The deck omits ownership structure and dilution details. Series B decks should transparently show investor alignment and runway post-raise.

A long-term vision beyond safety research is absent. Modern decks include acquisition paths or IPO timelines to contextualise returns.

These omissions reflect the unique market dynamics of 2022, when AI safety positioning and elite team credentials could overcome traditional due diligence requirements. Today’s AI fundraising environment demands more comprehensive business planning, even for research-focused companies. At Projects RH, we help founders balance technical innovation with commercial rigour, ensuring their decks address both the opportunities Anthropic captured and the gaps that modern investors won’t overlook.

Anthropic won by pitching the safety flaws others ignored, turning a perceived weakness into a moat. Founders should identify market blind spots and own them early to stand out.

With no product, the team bios stole the show. Link each member’s expertise to a specific risk, and present credentials as proof of execution capability.

The 2,500-company waitlist proved demand. Use LOIs, pilots, or sign-ups to show pull even before launch.

The deck signalled governance readiness to investors writing large cheques. Tailor your narrative to LPs who value compliance in regulated technology.

Open discussion of risks built trust. Founders should pre-empt concerns with mitigations to accelerate diligence.

The ultra-concise structure forced clarity. Aim for 10-15 slides and edit ruthlessly for investor attention spans.

The pitch was tied to the compute boom and emerging regulation. Ground your own pitch in macro tailwinds so the opportunity feels inevitable.

The distance between the Anthropic that presented this deck and the Anthropic that exists today represents one of the most remarkable transformations in business history. In roughly four years, they evolved from a $5B research lab with zero revenue and a 2,500-company waitlist to a $380B AI powerhouse generating $14B in annual recurring revenue and serving millions of users worldwide through Claude.

The investment mathematics are staggering: measured from the FTX-led $580M Series B at a $5B post-money valuation to today’s $380B valuation, the headline multiple is 76x ($380B ÷ $5B) before dilution from later rounds, representing one of the fastest value creation stories in venture capital history. Even accounting for FTX’s subsequent collapse and secondary sales, early investors who held their positions have seen unprecedented returns that validate the power of timing, team, and contrarian positioning in transformational technology markets.
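The 76x figure is a ratio of two valuations, so it is worth seeing how it is derived and how dilution would change it. The sketch below uses the $5B and $380B valuations quoted in this article. The dilution and holding-period inputs are hypothetical placeholders, not reported figures, and the function names are illustrative.

```python
def headline_multiple(entry_valuation, current_valuation):
    """Valuation-to-valuation multiple, ignoring dilution."""
    return current_valuation / entry_valuation


def diluted_multiple(entry_valuation, current_valuation, cumulative_dilution):
    """Multiple on an early stake after later rounds dilute it.

    cumulative_dilution is the fraction of the original ownership lost to
    subsequent share issuance (0.0 means no dilution).
    """
    if not 0.0 <= cumulative_dilution < 1.0:
        raise ValueError("cumulative_dilution must be in [0, 1)")
    gross = headline_multiple(entry_valuation, current_valuation)
    return gross * (1.0 - cumulative_dilution)


def annualised_multiple(multiple, years):
    """Constant per-year growth factor that compounds to `multiple`."""
    if years <= 0:
        raise ValueError("years must be positive")
    return multiple ** (1.0 / years)


if __name__ == "__main__":
    ENTRY = 5e9      # post-money valuation quoted in the article
    CURRENT = 380e9  # current valuation quoted in the article

    # Hypothetical inputs, chosen only to show the mechanics.
    HYPOTHETICAL_DILUTION = 0.40
    HYPOTHETICAL_YEARS = 4

    gross = headline_multiple(ENTRY, CURRENT)
    net = diluted_multiple(ENTRY, CURRENT, HYPOTHETICAL_DILUTION)
    print(f"headline multiple: {gross:.0f}x")
    print(f"after {HYPOTHETICAL_DILUTION:.0%} dilution: {net:.1f}x")
    print(f"per-year factor: {annualised_multiple(net, HYPOTHETICAL_YEARS):.2f}x")
```

The headline multiple comes out at 76x, matching the figure above. Any realistic return to a specific investor would be lower once dilution from later rounds, and the price at which shares were actually sold, are taken into account.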

This extraordinary outcome wasn’t guaranteed by the deck alone—it required flawless execution on the Constitutional AI vision, strategic partnerships with cloud providers, and the ability to scale research into commercial products that enterprises actually wanted to deploy. But the pitch deck’s focus on safety as a competitive moat, institutional readiness, and transparent risk management created the foundation for this remarkable transformation from research lab to AI industry leader.

At Projects RH, we help companies across all industries create investor-ready materials that close deals. Our integrated capital raising package ensures consistency across all your investor documentation.

The 2022 Series B deck had exactly 10 slides, focusing on safety positioning, team, and traction without a product demo.

Anthropic raised $580M in its Series B round, led by FTX’s Sam Bankman-Fried, on top of a $124M Series A, valuing the safety-focused AI research firm highly despite zero revenue.

Contrarian safety-first narrative, elite OpenAI-alum team, quantified waitlist traction (2,500 companies), and institutional capital readiness propelled it from $5B valuation to $380B today.

Yes, for pre-product AI and deep tech raises: emulate the team emphasis and traction proxies, but adapt the narrative to your own moat, and add financials and a go-to-market plan for later stages.

The deck supported the Series B, announced in April 2022 after a $124M Series A in 2021, and helped secure $580M for compute and research despite zero revenue, on the back of strong demand signals.

Creating an effective pitch deck requires more than following a template — it demands strategic clarity about your value proposition, a deep understanding of your target investors, and rigorous financial modelling to support your narrative. At Projects RH, we combine financial expertise with strategic storytelling to build pitch decks, information memorandums, and financial models that meet the standards of institutional investors worldwide. Our team has generated over USD 2.0 billion in expressions of interest across mining, energy, technology, medtech, and financial services sectors. Schedule a consultation to discuss how we can help position your company for successful capital raising.